The Art and Science of Hybrid Images

When we first come across this type of image, we tell ourselves that our naive little brain is playing another trick on us. What magic is behind this masquerade? Someone’s trying to fool us!

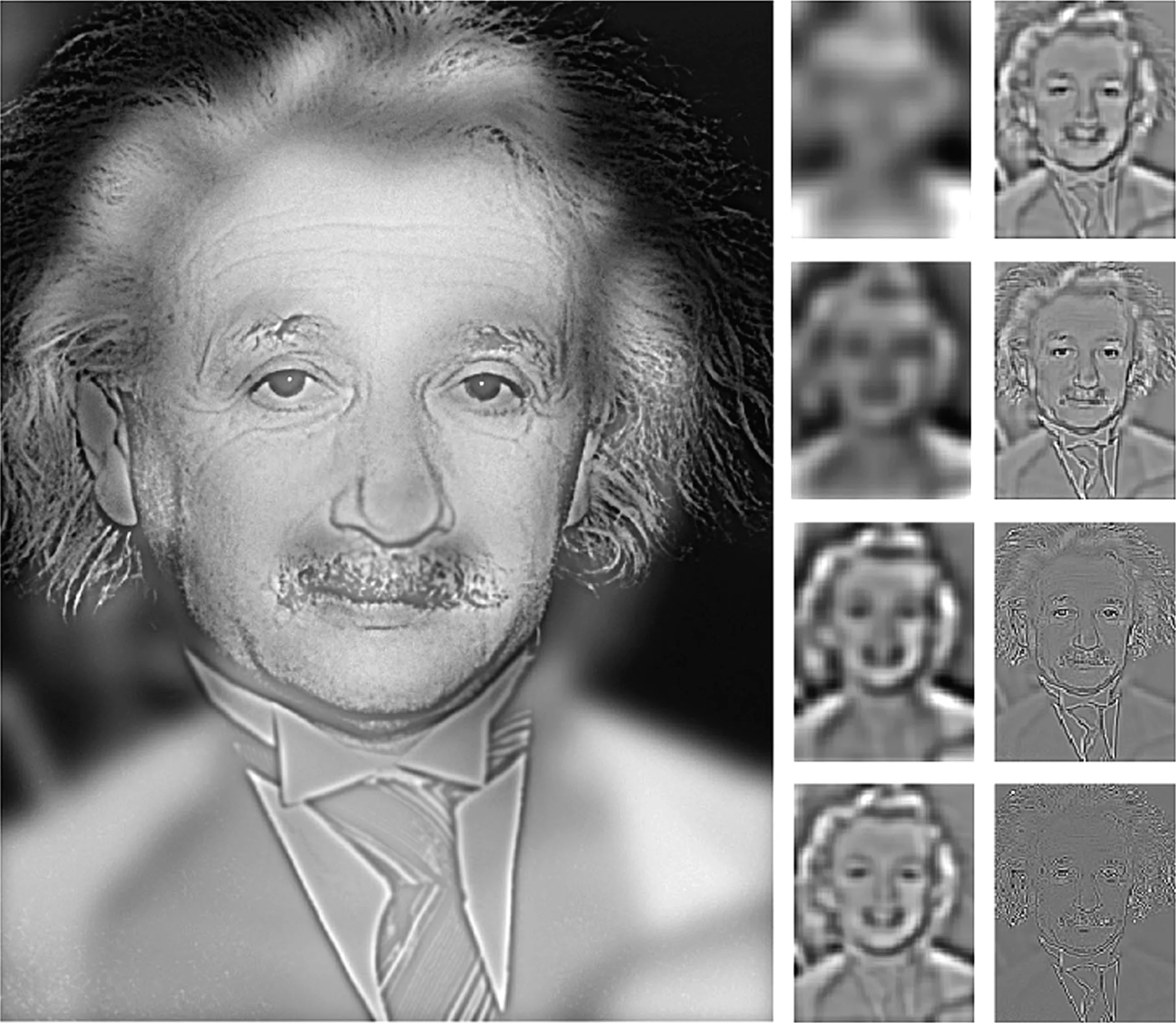

It often starts with Marilyn Monroe. Looking at the image from several meters away, we are positive: it is indeed Marilyn. But a few seconds later, as we move closer, that judgement has to be revised, and Albert Einstein seems to be laughing at us!

We are going to tell you why these images are fascinating, and how they can help us better understand our “gaze”.

Visuospatial resonance

The visual phenomenon behind hybrid images is called “visuospatial resonance”. We are in the field of neuroscience, and the first discoveries date back to the 1970s. The question was to understand how the brain analyzes an image.

On the one hand there are the visual stimuli provided by the eyes, and on the other hand there is the memory of the observer. In concrete terms, everything happens in less than 0.1 seconds. Yet it is a relatively laborious, iterative process of comparison: it starts with a rough view and then, detail after detail, arrives at a precise interpretation of the object being looked at.

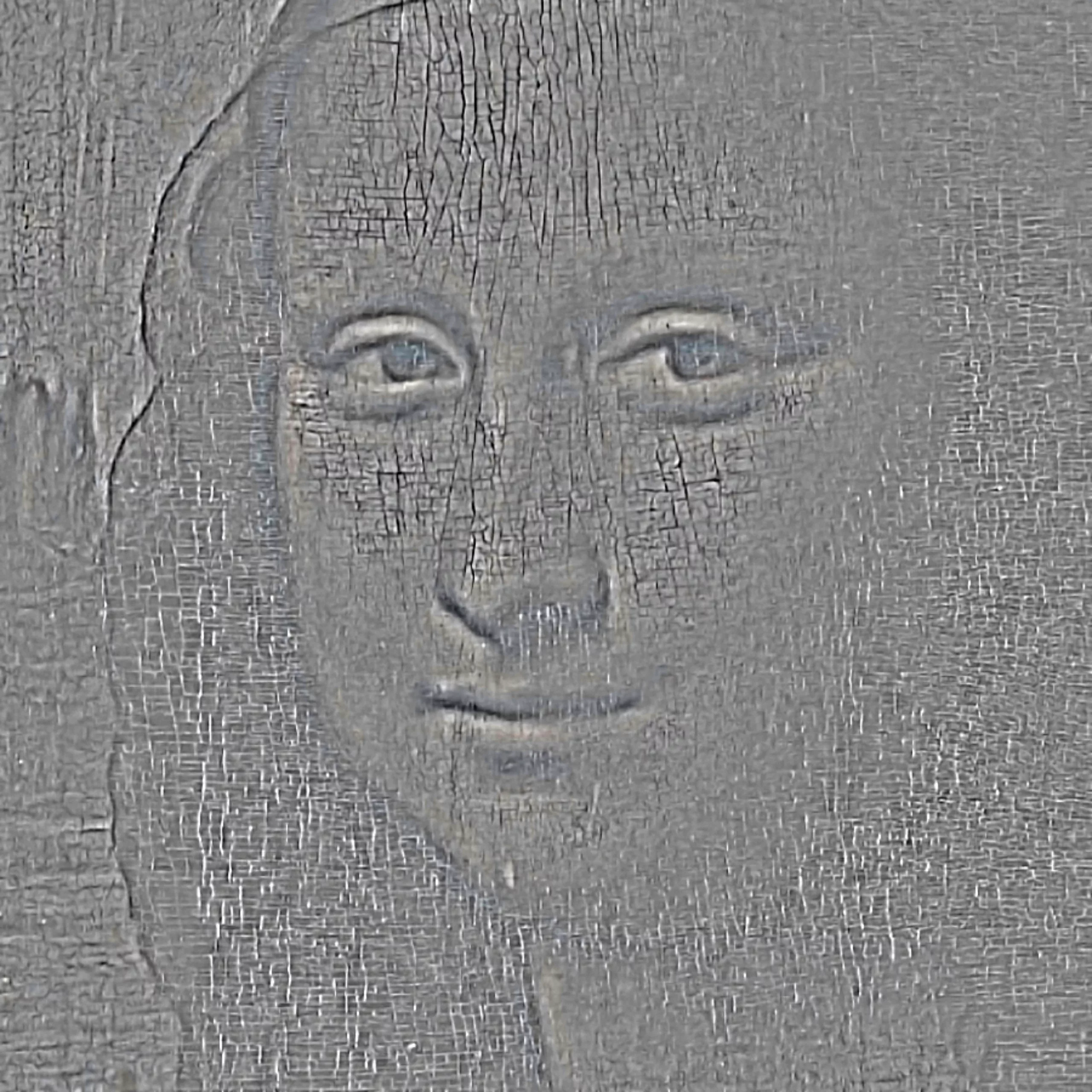

Obviously, good eyesight is a big advantage. I can guarantee that without glasses, you will not recognize much in less than 0.15 seconds. To describe the sharpness of an image, we talk about spatial frequency. High-frequency images are images with very sharp edges and fine detail. We see them very well up close, but after a few meters they vanish from view. Conversely, low-frequency images appear blurred at close range, but readable from a distance. It is by combining two such images (hence “resonance”) that we can produce these famous hybrid images.

But why does the eye work this way? I thought our brain was lazy, so why does it bother to look at two visuospatial ranges at the same time? Couldn’t it have found a more economical way?

The eye: “Hello brain, I’m sending you an HD picture, I don’t know what it is, but figure it out…”

The brain: “Ok, wait 30 seconds, it’s downloading…”

The eye: “Go ahead and hurry! Maybe it’s a tiger!”

The brain: “Hey, easy, I’m still on 56 kbps… OK, it’s fine, it’s a cat!”

As you will have guessed, this dialogue is pure fiction, and any resemblance to a real scene is purely coincidental. But there is a lot of truth in this exchange. If all the information had to pass between the eye and the brain at once, we would have serious problems of cognitive saturation. To avoid this, nature has given us two receiving channels, each with a different bit rate.

That’s where the iterations begin. The low frequencies are sent to the brain very quickly, to get a first response. This “coarse” visual information allows a first recognition of the scene. You can experience these “low frequencies” yourself, since they are the “blurred” areas at the edges of your field of vision. When the tiger surreptitiously enters your living room and sneaks between the TV and the sofa while you are concentrating on this article, it is the low frequencies that will save your life, even before you see that it was a cat. At that point, the stopwatch reads about 0.08 seconds.

The brain: “Go ahead and raise your head, I’m not sure that the moving red spot isn’t a tiger! I’d like to check.”

At this point, the brain can then ask the eye to look more closely. The whole body is on alert. The high frequencies will come into play to confirm or reject the coarse image recognition.

The brain: “Can we stop the stopwatch? I would like to know my performance.”

There’s nothing to be afraid of, it was a cat. The stopwatch reads 0.15 seconds.

Making hybrid images

Hybrid images came out of this neuroscience research. The principle is to mix two images, one reduced to its low frequencies and the other to its high frequencies. We owe this visual innovation to researcher Aude Oliva and her laboratory at MIT. You can find her scientific publication at this address.

If you want to have fun making this type of image, the process is quite simple. In Photoshop, import your two images as separate layers. Apply a “Gaussian Blur” filter to the first one and a “High Pass” filter to the second one, then superimpose the two. Here is a source file to get you started. Don’t hesitate to send us a link to your creations in the comments.
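For readers who would rather script the recipe than use Photoshop, here is a minimal sketch of the same idea in plain Python: blur one image, subtract a blurred copy from the other to keep only its fine detail, then add the two. It works on grayscale images stored as lists of rows with values between 0 and 1. The function names, the default `sigma` values and the synthetic test images are assumptions made for illustration; they do not come from the original publication, and the high-pass step used here (image minus its blur) is only an approximation of what Photoshop’s filter does.

```python
import math


def gaussian_kernel(sigma):
    """Return a normalized 1-D Gaussian kernel spanning about 3 sigma per side."""
    radius = max(1, int(3 * sigma))
    weights = [math.exp(-(i * i) / (2.0 * sigma * sigma))
               for i in range(-radius, radius + 1)]
    total = sum(weights)
    return [w / total for w in weights]


def blur_rows(image, kernel):
    """Convolve every row with the kernel, repeating edge pixels at the borders."""
    radius = len(kernel) // 2
    width = len(image[0])
    blurred = []
    for row in image:
        new_row = []
        for x in range(width):
            acc = 0.0
            for k, weight in enumerate(kernel):
                xi = min(max(x + k - radius, 0), width - 1)
                acc += weight * row[xi]
            new_row.append(acc)
        blurred.append(new_row)
    return blurred


def transpose(image):
    """Swap rows and columns."""
    return [list(column) for column in zip(*image)]


def gaussian_blur(image, sigma):
    """Low-pass filter: blur along rows, then along columns."""
    kernel = gaussian_kernel(sigma)
    horizontal = blur_rows(image, kernel)
    return transpose(blur_rows(transpose(horizontal), kernel))


def high_pass(image, sigma):
    """Keep only fine detail: the image minus its blurred version."""
    blurred = gaussian_blur(image, sigma)
    return [[p - b for p, b in zip(row, blurred_row)]
            for row, blurred_row in zip(image, blurred)]


def hybrid(far_image, near_image, sigma_low=3.0, sigma_high=1.0):
    """Add the low frequencies of one image to the high frequencies of another.

    Both images must have the same size; the result is clipped to [0, 1].
    """
    low = gaussian_blur(far_image, sigma_low)
    high = high_pass(near_image, sigma_high)
    return [[min(1.0, max(0.0, l + h)) for l, h in zip(low_row, high_row)]
            for low_row, high_row in zip(low, high)]


# Synthetic 16x16 inputs (hypothetical stand-ins for two photographs):
# a broad left-to-right ramp, visible from afar, and fine vertical
# stripes, visible only up close.
size = 16
far_image = [[x / (size - 1) for x in range(size)] for _ in range(size)]
near_image = [[float(x % 2) for x in range(size)] for _ in range(size)]
result = hybrid(far_image, near_image)
```

With real photographs you would normally load the pixels through an imaging library and use its optimized blur; this version favours readability over speed. A larger `sigma_low` makes the distant image blurrier, while a smaller `sigma_high` keeps only the finest details of the close-up image.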

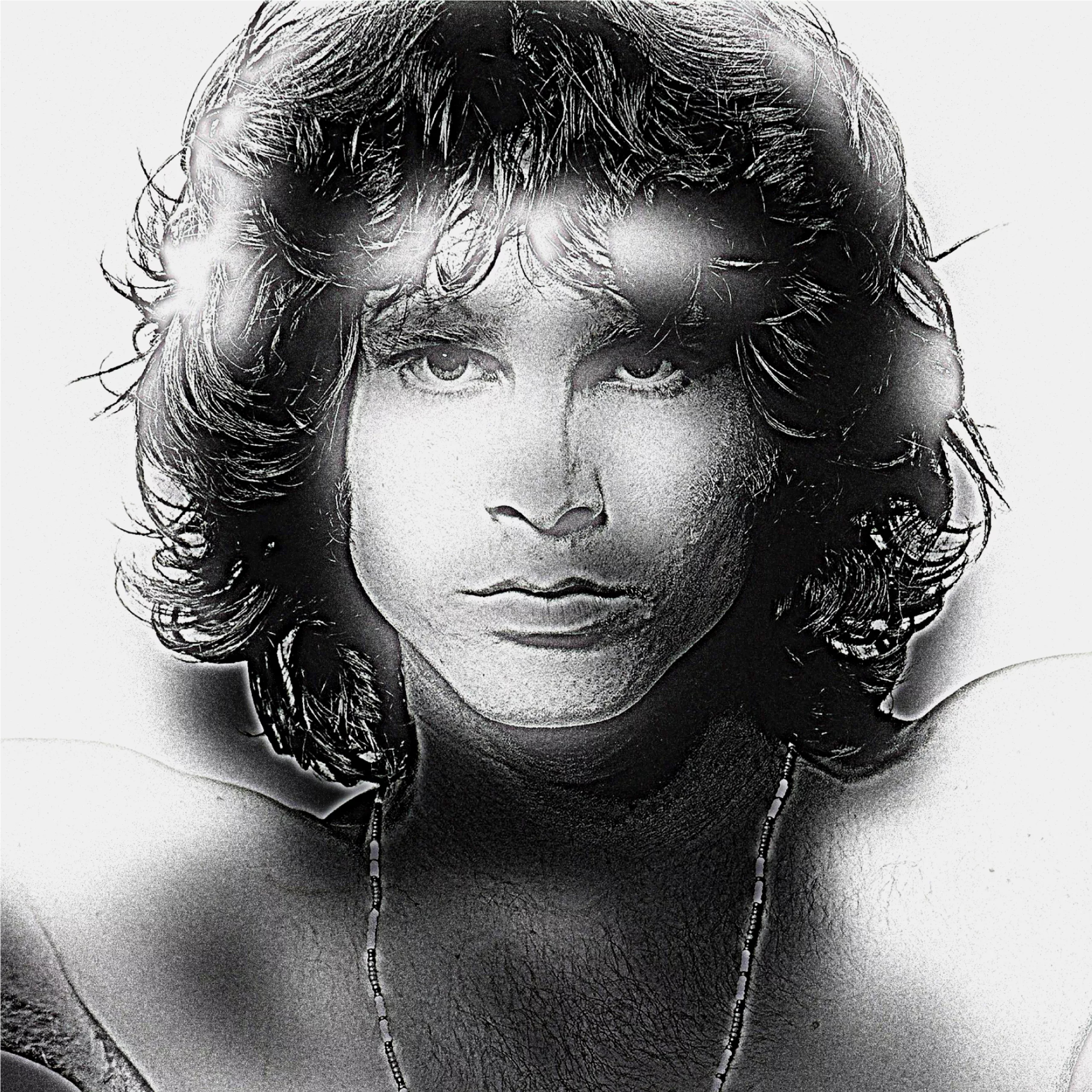

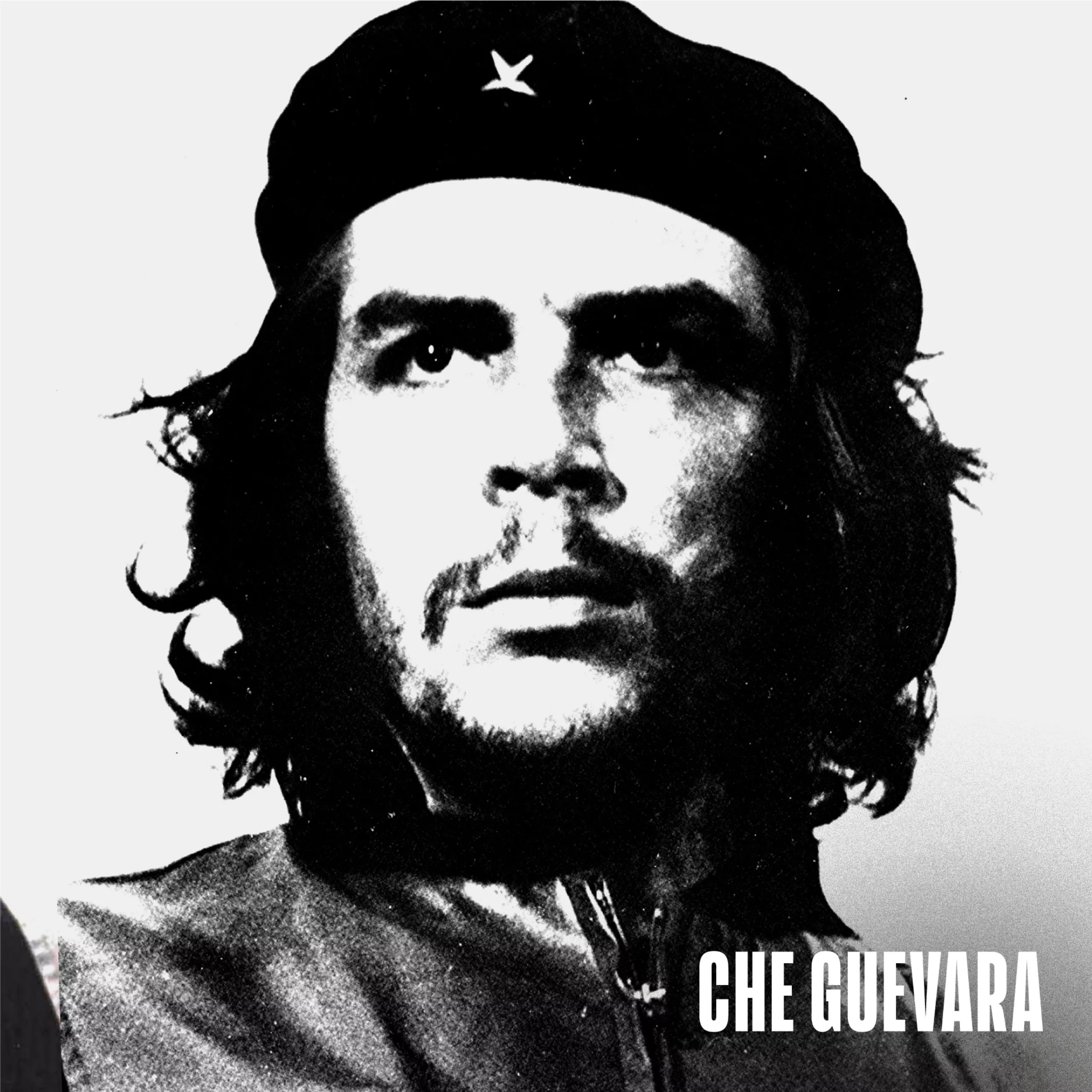

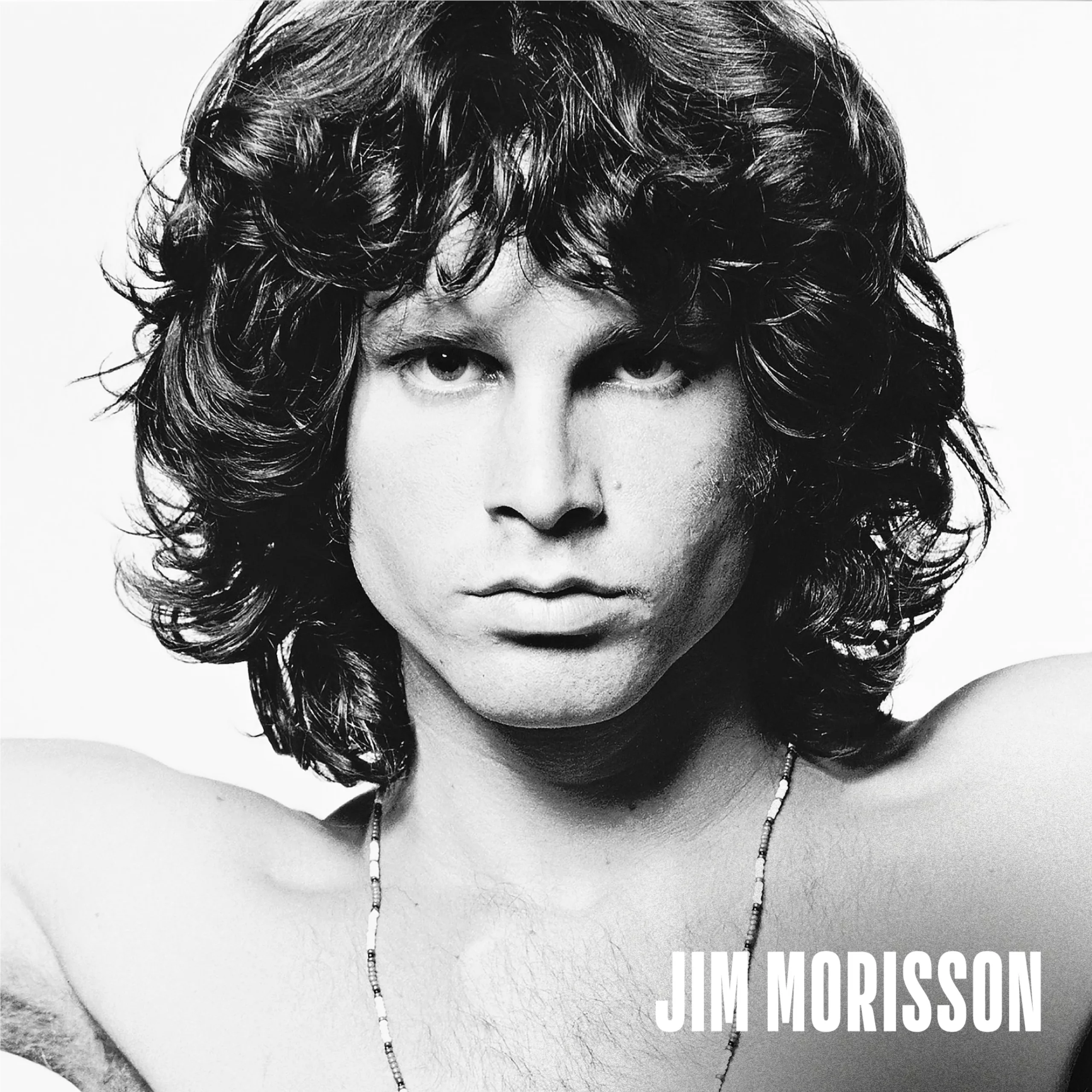

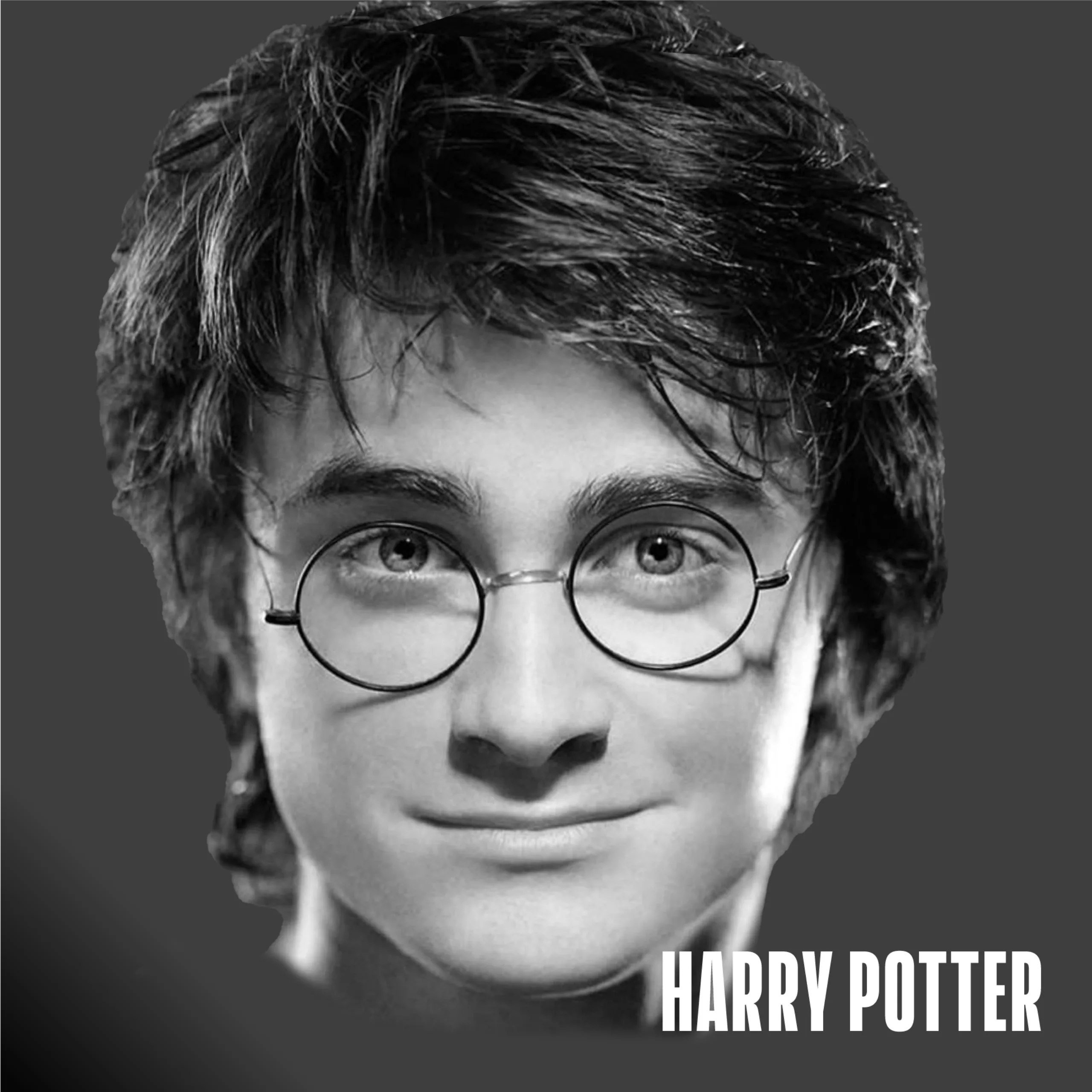

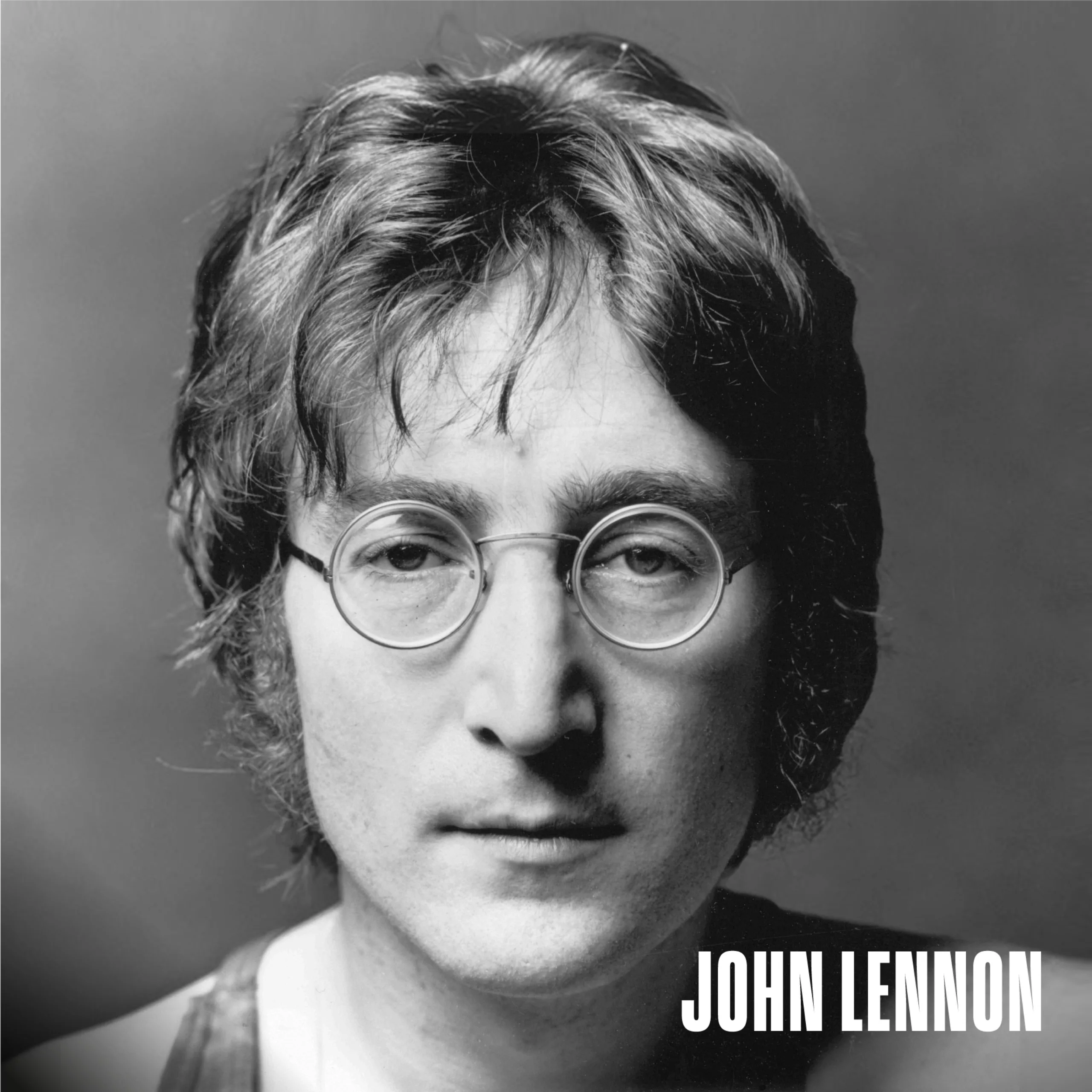

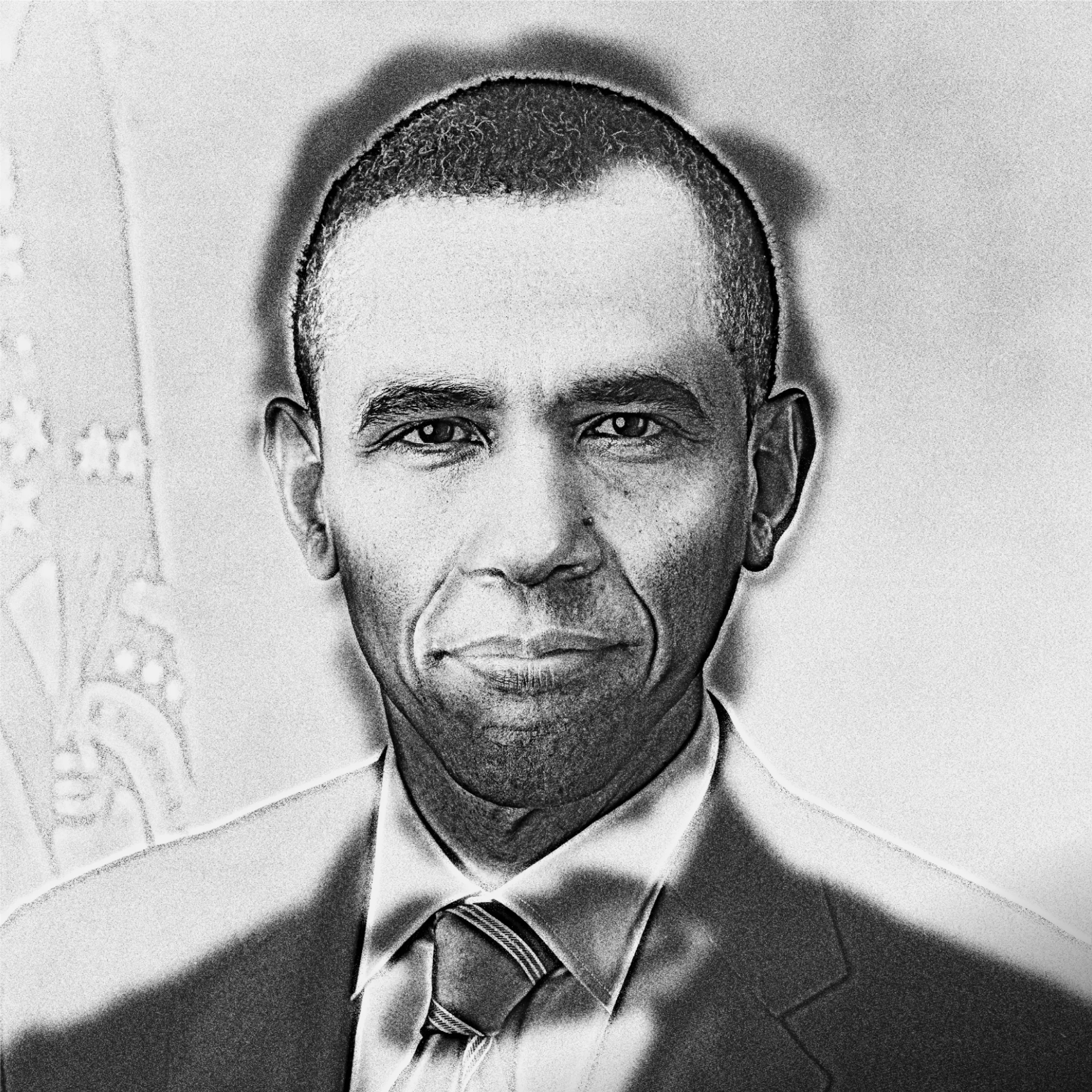

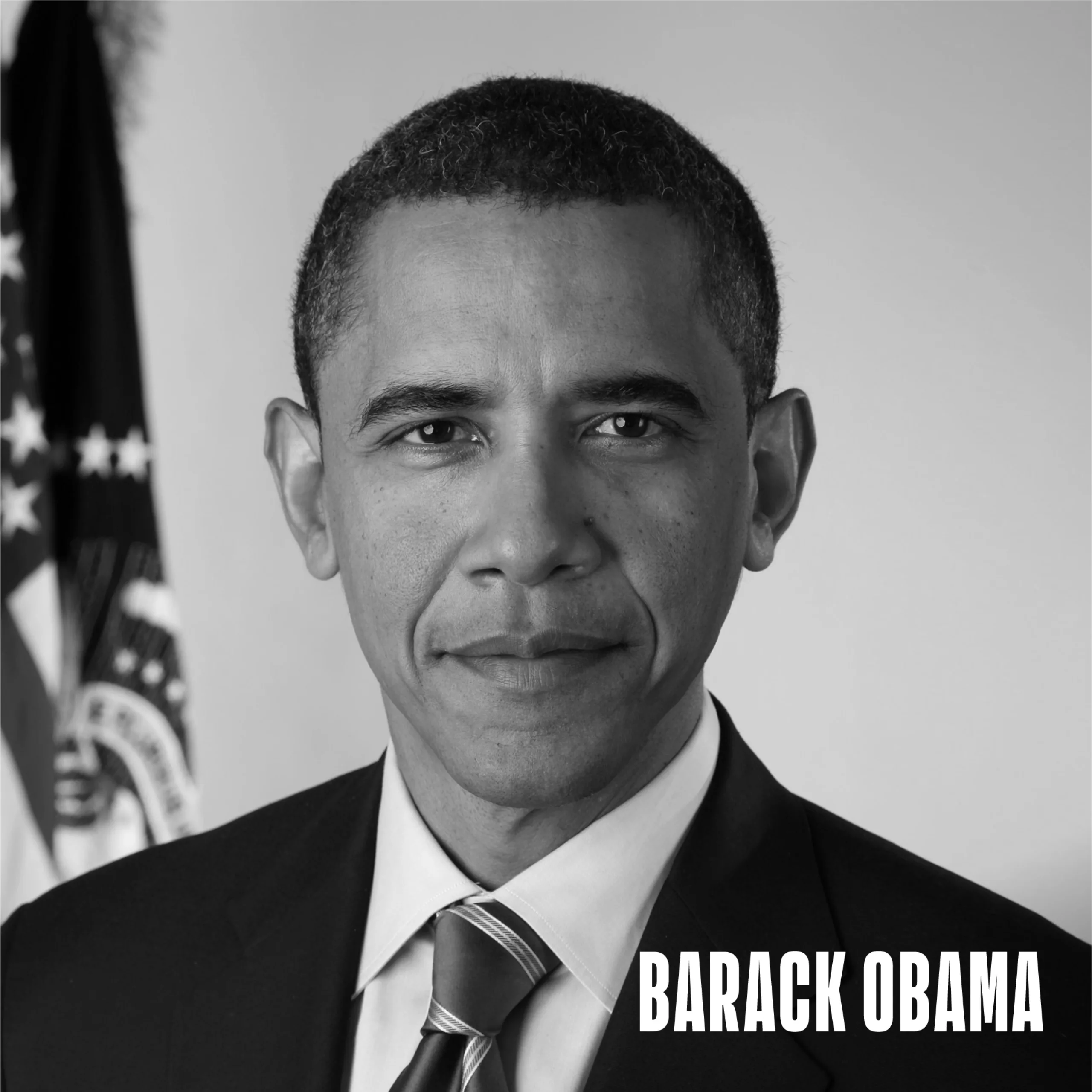

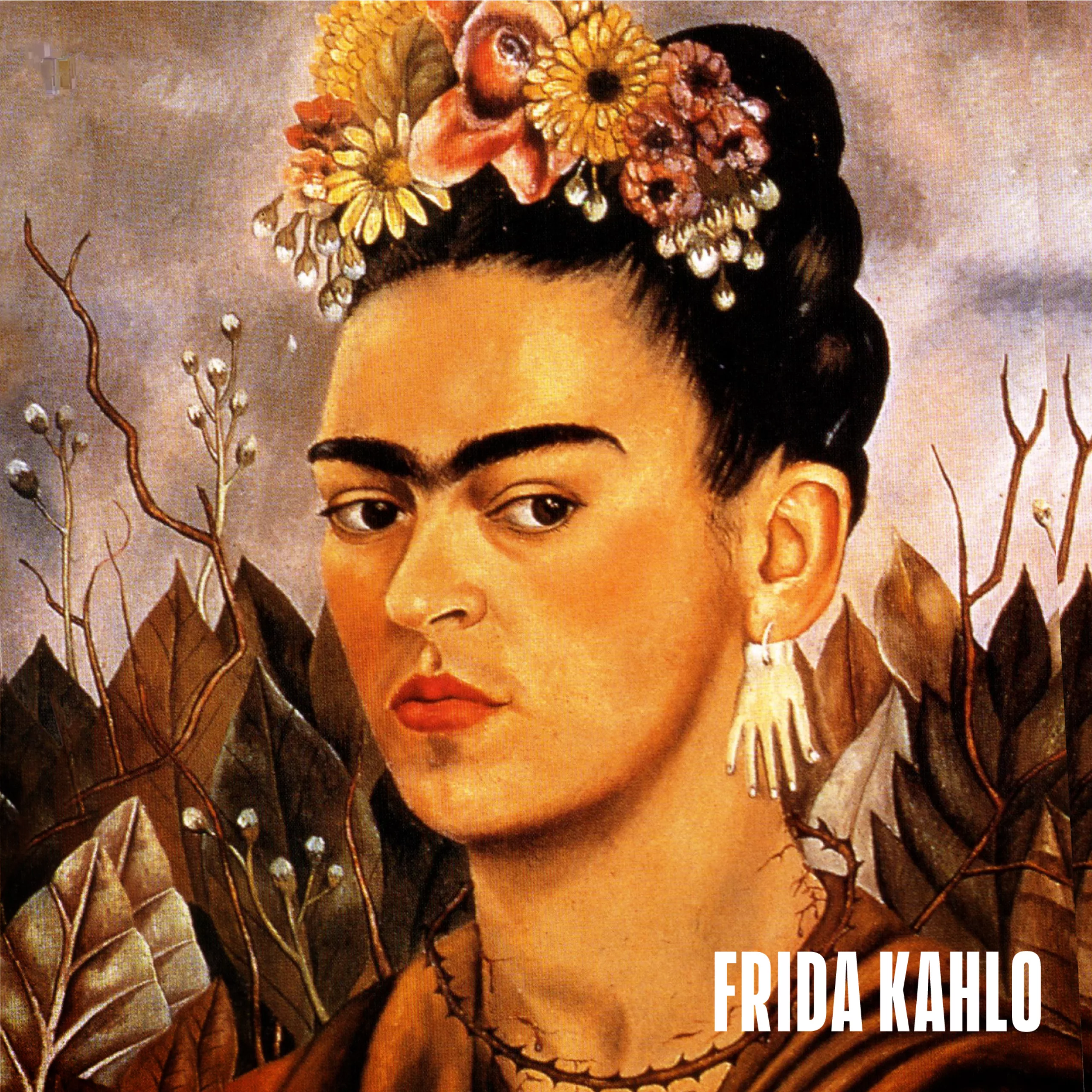

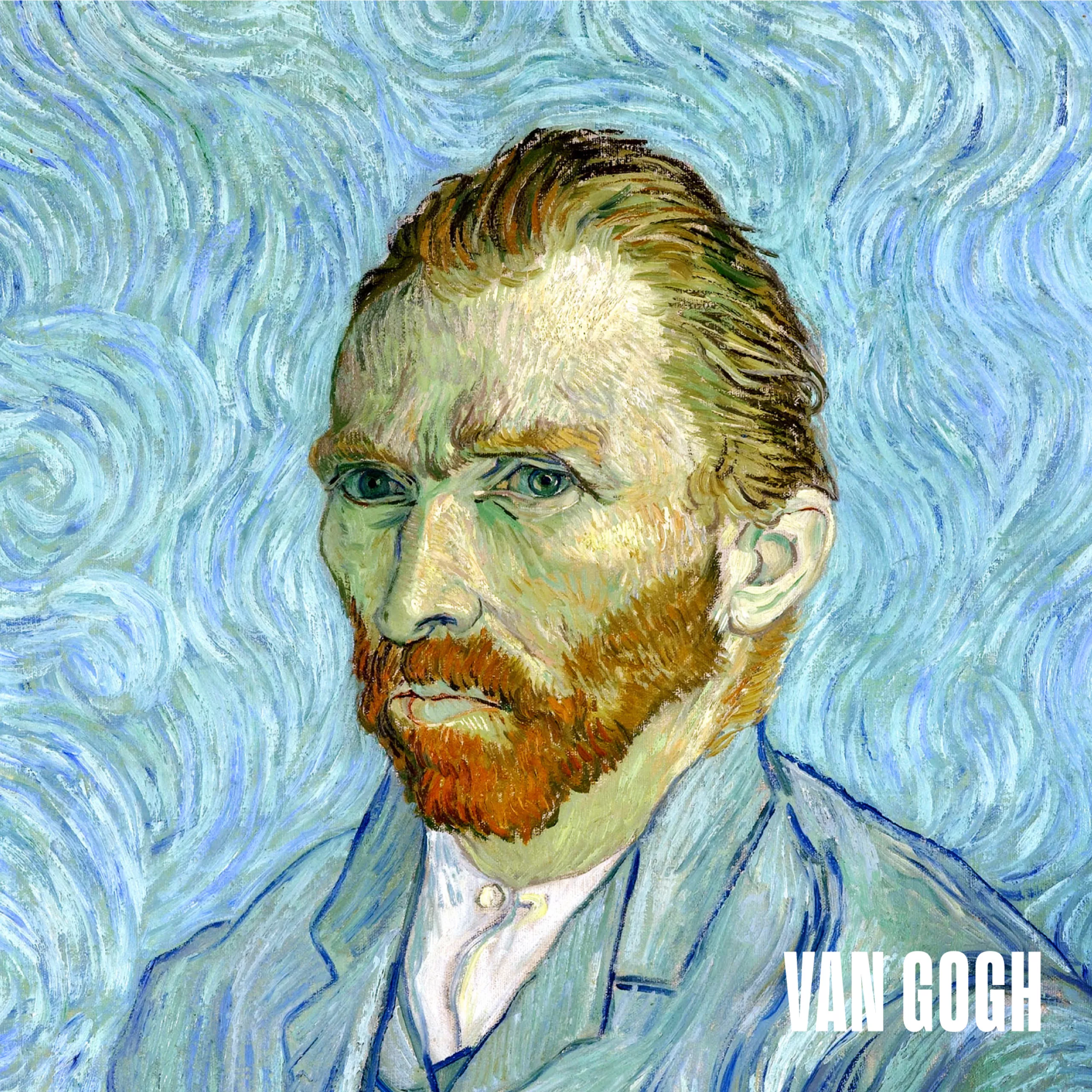

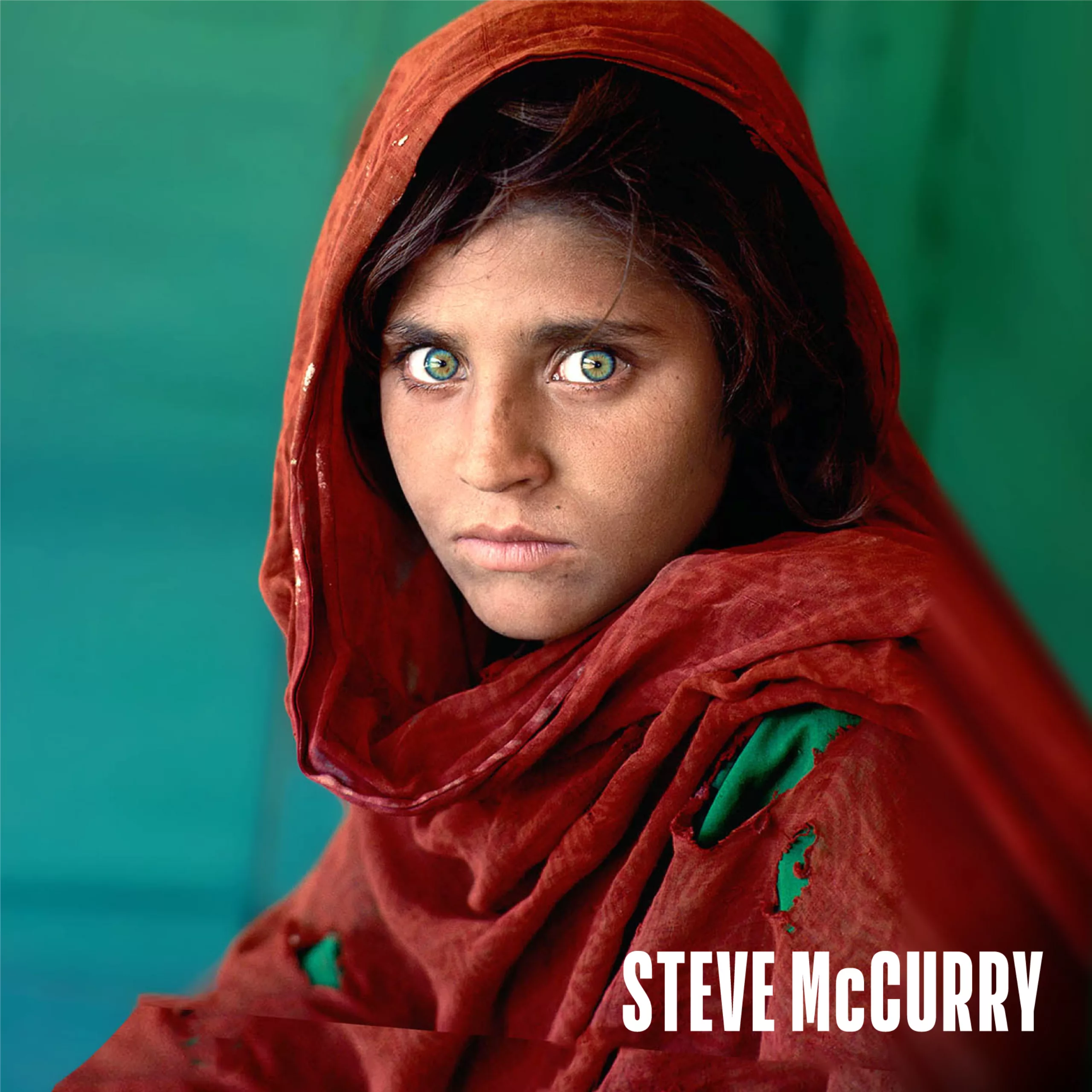

Who’s hiding in these pictures?

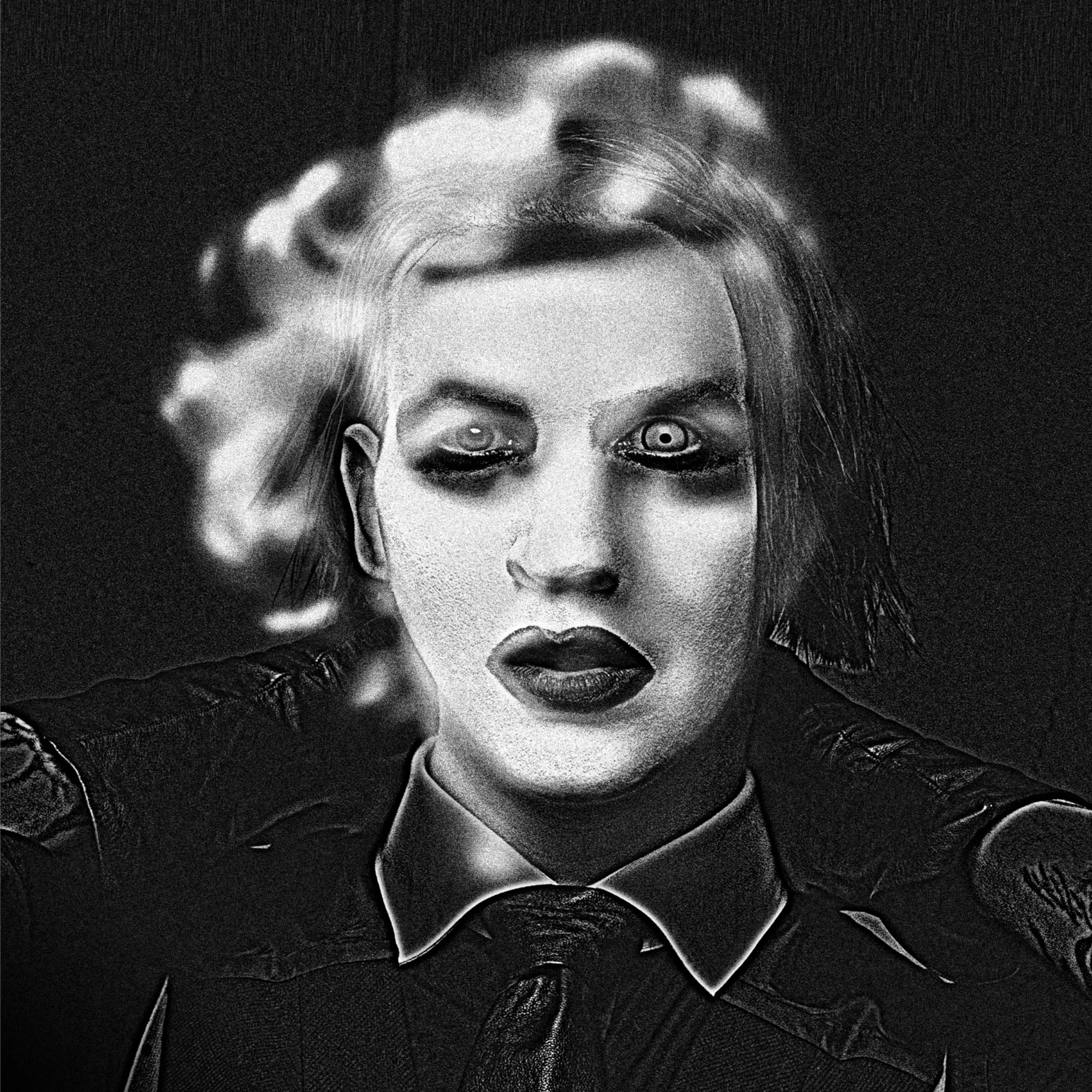

Here is a little game.

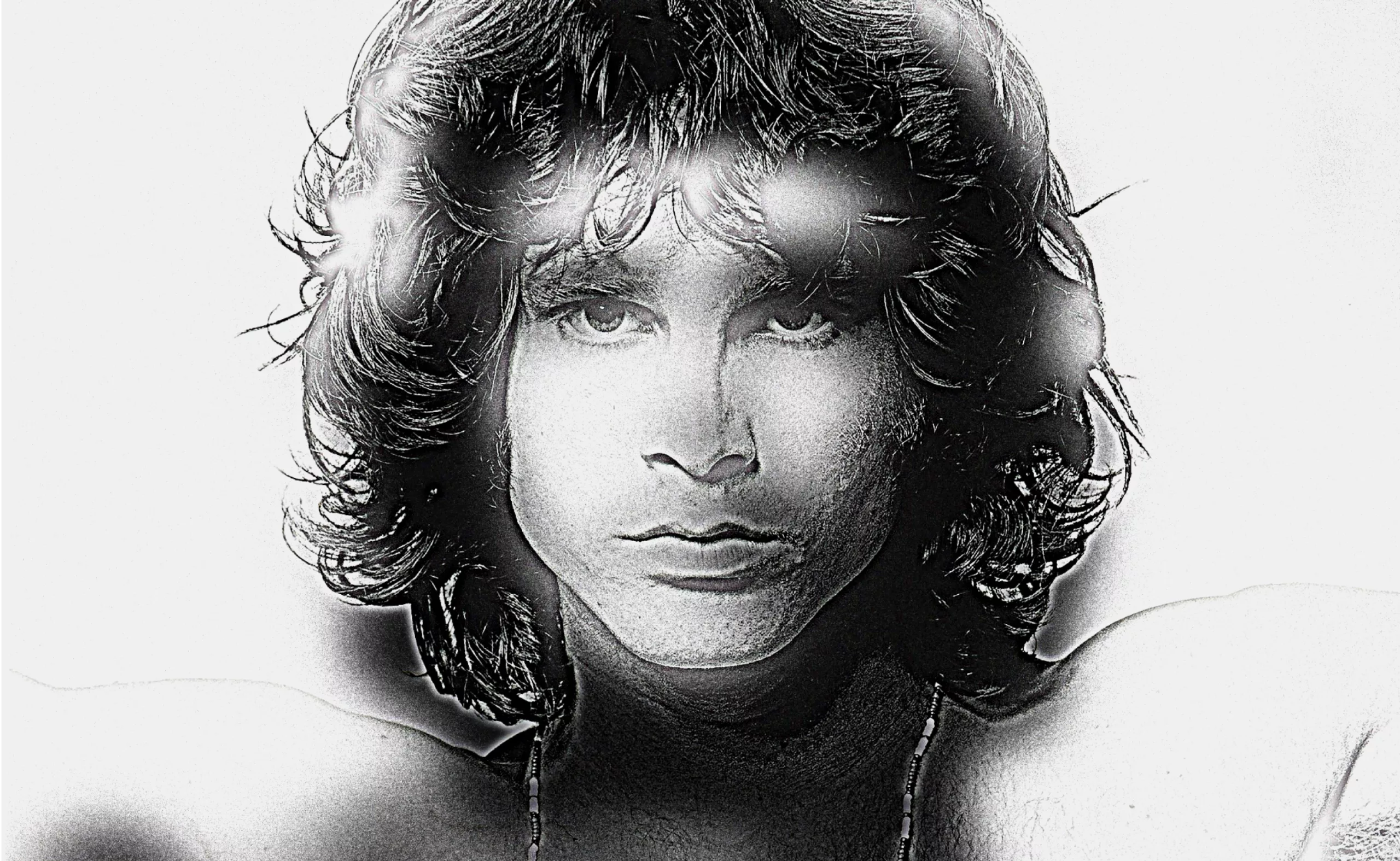

Can you recognize which celebrities are hidden in these pictures?

Feel free to move the screen away from your eyes. You can also squint.

Scroll through the pictures to discover the original images.

More images can be found on the Instagram account @Hybrid_images.

Test your available brain time

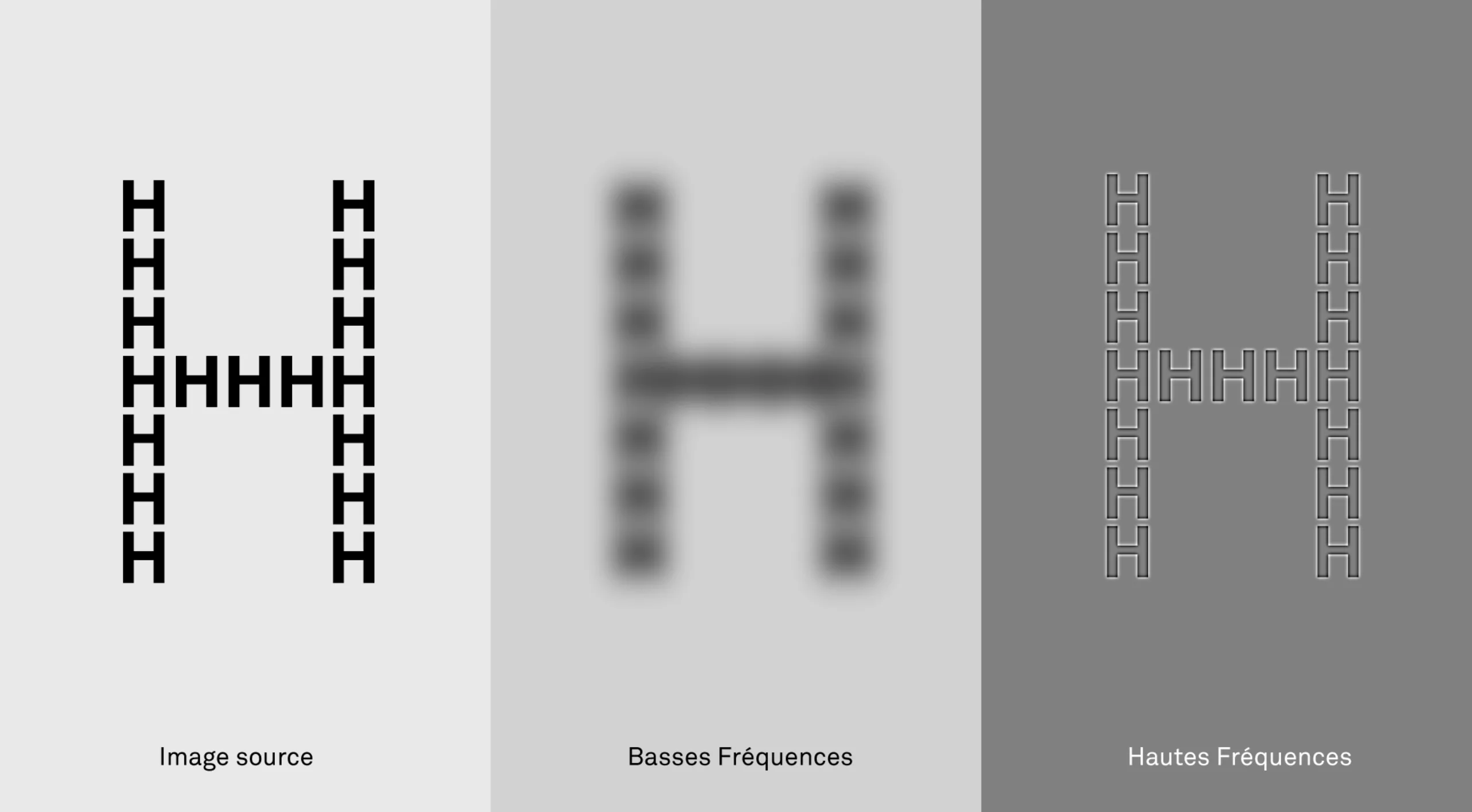

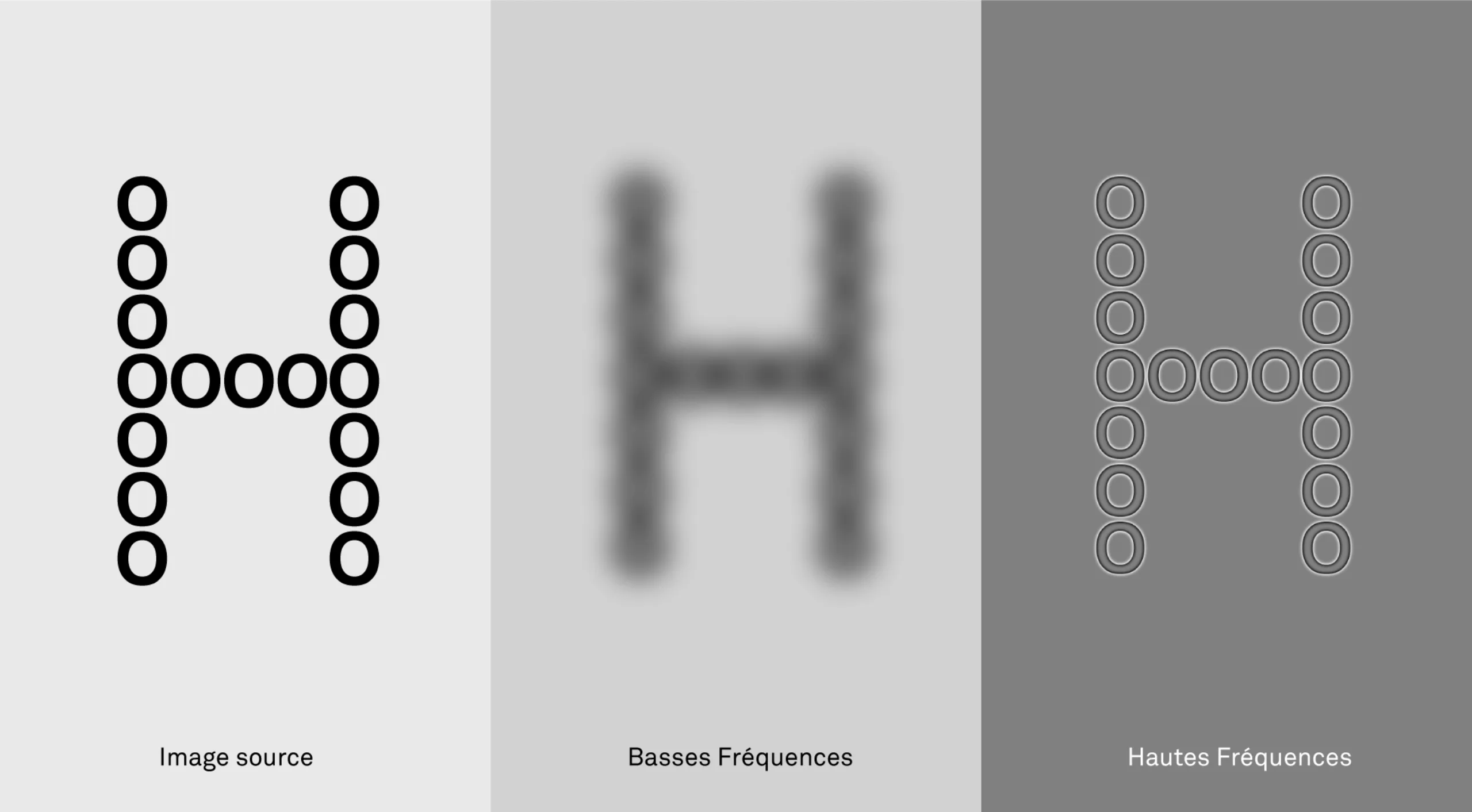

In the example of the tiger and the cat, we saw how an image is recognized by iterations. Let’s put this mechanism into practice with a fun little test. Below, you have two images: an “H” composed of small “H’s” and another “H” composed of small “O’s”. But wait a few more seconds before looking at these images. The goal of the game is to observe what your eyes do. Pay attention, because everything happens in 0.15 seconds for the first image and takes a little longer for the second.

In the first image, the low-frequency stimulus sends a first piece of information to the brain (a big H). The second, high-frequency stimulus confirms that it is made of small H’s. High and low frequencies send two pieces of information that are conceptually “congruent” for the brain.

In the second image, the low-frequency stimulus is the same (a big H), but the high-frequency stimulus sends back “non-congruent” information (small O’s). The brain then asks for verification… and presto, the eye looks again to check what is happening. The reading time of the image is doubled, even tripled.
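The two test figures are easy to rebuild as text, which makes it simple to compare the congruent and non-congruent cases side by side. The sketch below draws a large “H” out of a chosen small letter; figures of this kind are often called Navon figures. The 5×5 bitmap and the names `BIG_H` and `navon_figure` are illustrative assumptions, not part of the original test.

```python
# 5x5 bitmap of the large letter "H": "#" marks a cell filled with a small letter.
BIG_H = [
    "#...#",
    "#...#",
    "#####",
    "#...#",
    "#...#",
]


def navon_figure(small_letter, pattern=BIG_H):
    """Draw the large letter described by `pattern` using copies of `small_letter`."""
    return "\n".join(
        "".join(small_letter if cell == "#" else " " for cell in row)
        for row in pattern
    )


congruent = navon_figure("H")    # big H made of small H's
incongruent = navon_figure("O")  # big H made of small O's

print(congruent)
print()
print(incongruent)
```

Swapping in a different bitmap for `pattern` changes the global letter, so the same function can produce other congruent or non-congruent pairs.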

So, first we have a global view (low frequencies), then a local view (high frequencies). And since we don’t have two eyes for nothing, each eye specializes in a different mission, again to save time. For most people, it is the right eye that looks at the details and the left eye that looks at the overall picture. Of course, each eye has both abilities. The proof: if you close your left eye, you will still see the cat jumping on you.

By repeating this experiment under brain imaging, neuroscientists were able to measure which side of the brain was active, and they confirmed that the left hemisphere is predisposed to details while the right is more inclined towards the global picture. This confirmed the first intuitions of the 1960s, when early neuroscientists observed the effects of brain injuries on their patients (e.g. a patient who understood vocabulary, but not puns). The left hemisphere of the brain is the seat of cold, concrete logic (high frequencies), while the right hemisphere is more oriented towards imagination and emotions (low frequencies). Seen through these blurred “H’s”, all this seems logical!

In the image above, we can see that the technique works very well with typography. One could easily imagine signage that plays with this principle. Imagine: from a distance you read message “A”, then as you get closer the message changes to “B”.

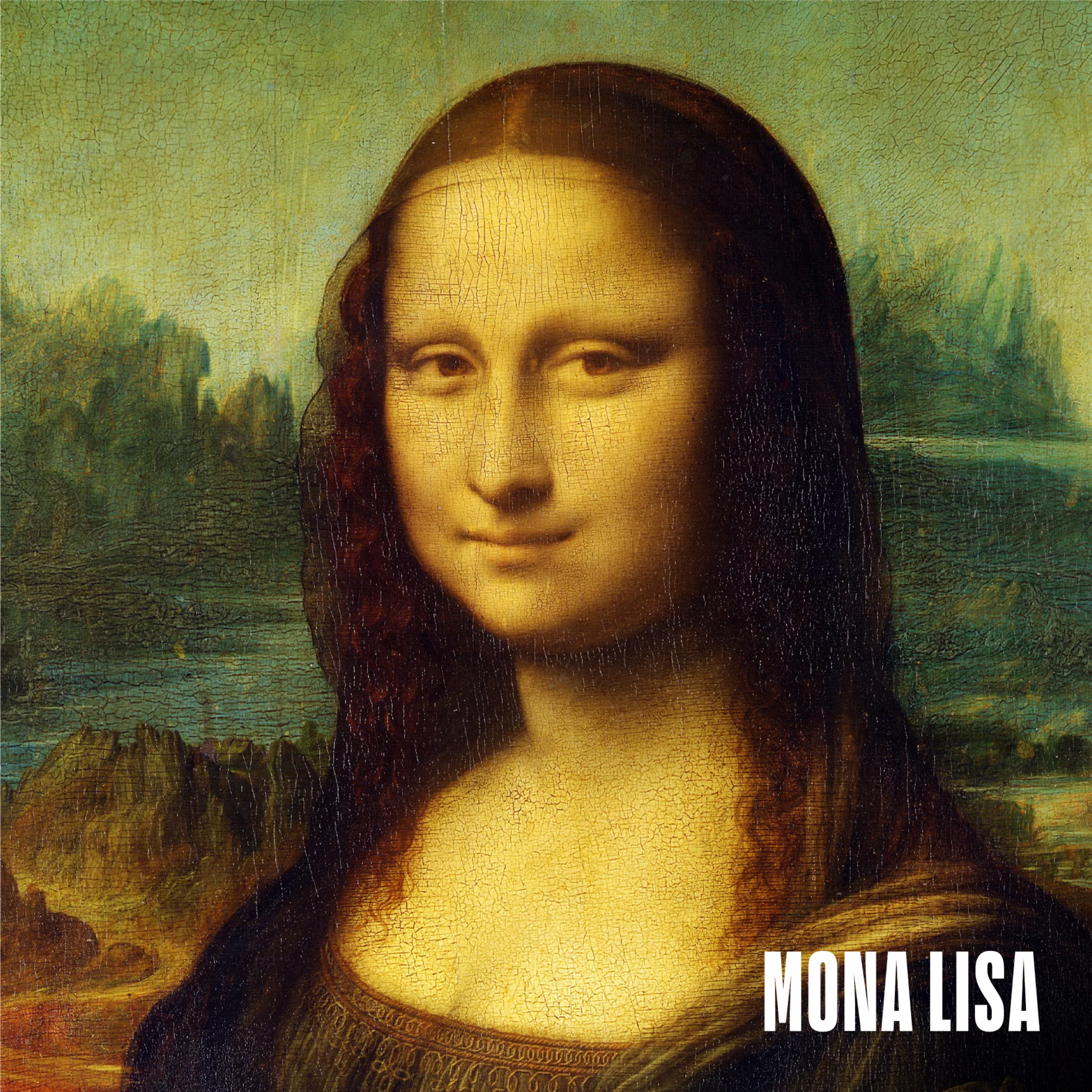

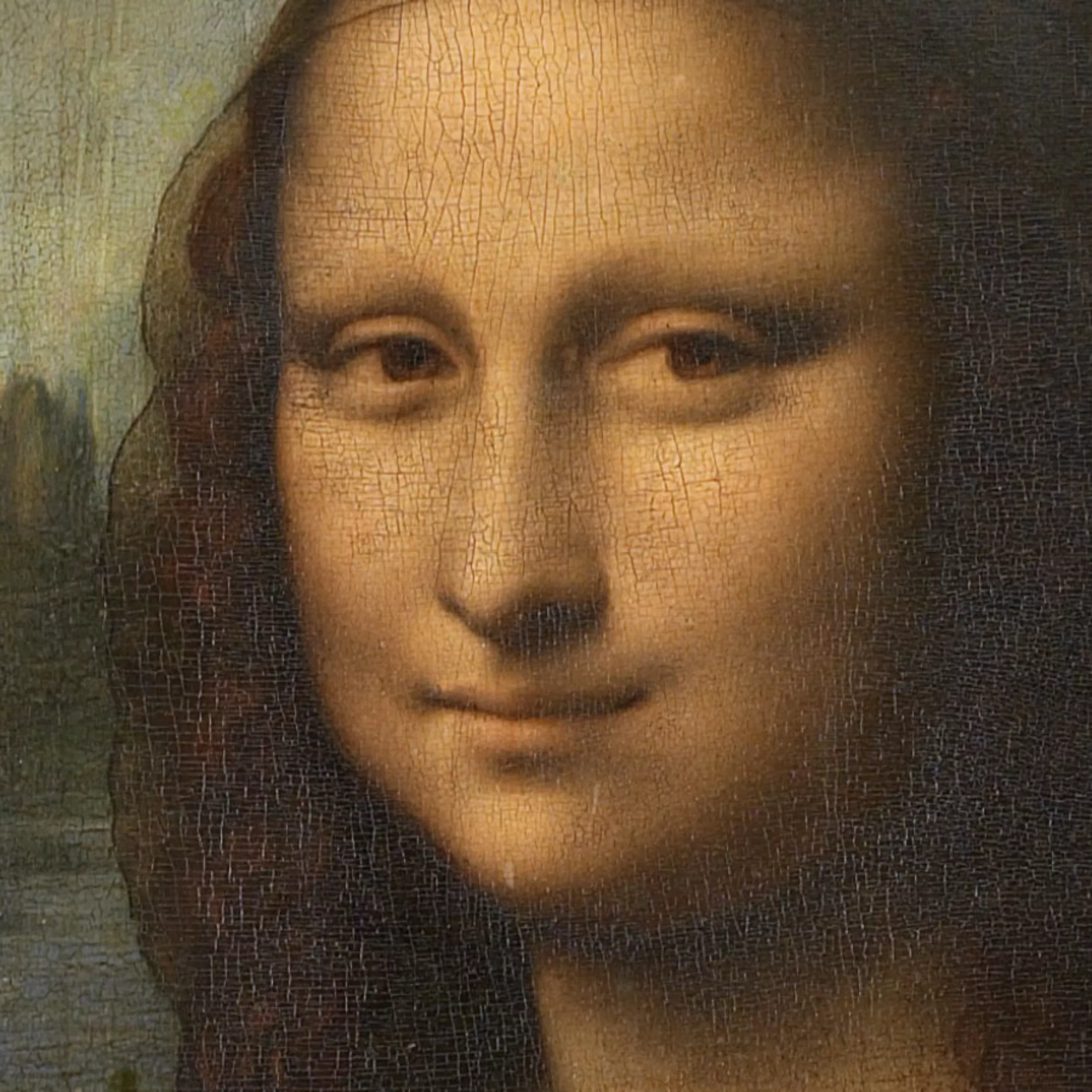

The secret of the Mona Lisa’s smile

The incredible Leonardo da Vinci had already exploited this principle of “visuospatial resonance” without knowing it. Everyone knows the Mona Lisa and her famous smile. Endless expert discussions have focused on this enigma: “Is the Mona Lisa smiling?”

The Italian maestro used a characteristic Renaissance technique called sfumato. It consists of very thin layers of glaze mixed with subtle pigments. When you look directly at the Mona Lisa’s smile, it seems to disappear. But when you look at the background, you can see a smile on her face.

In effect, these are two superimposed images: a “blurred” one (low frequency) showing a smile, and a “sharp” one that smiles less. So when our gaze shifts, for example to the small, sharp details in the background, the perception of the smile changes. The mystery of the Mona Lisa’s smile will have waited 500 years to be unravelled!

The art of double images

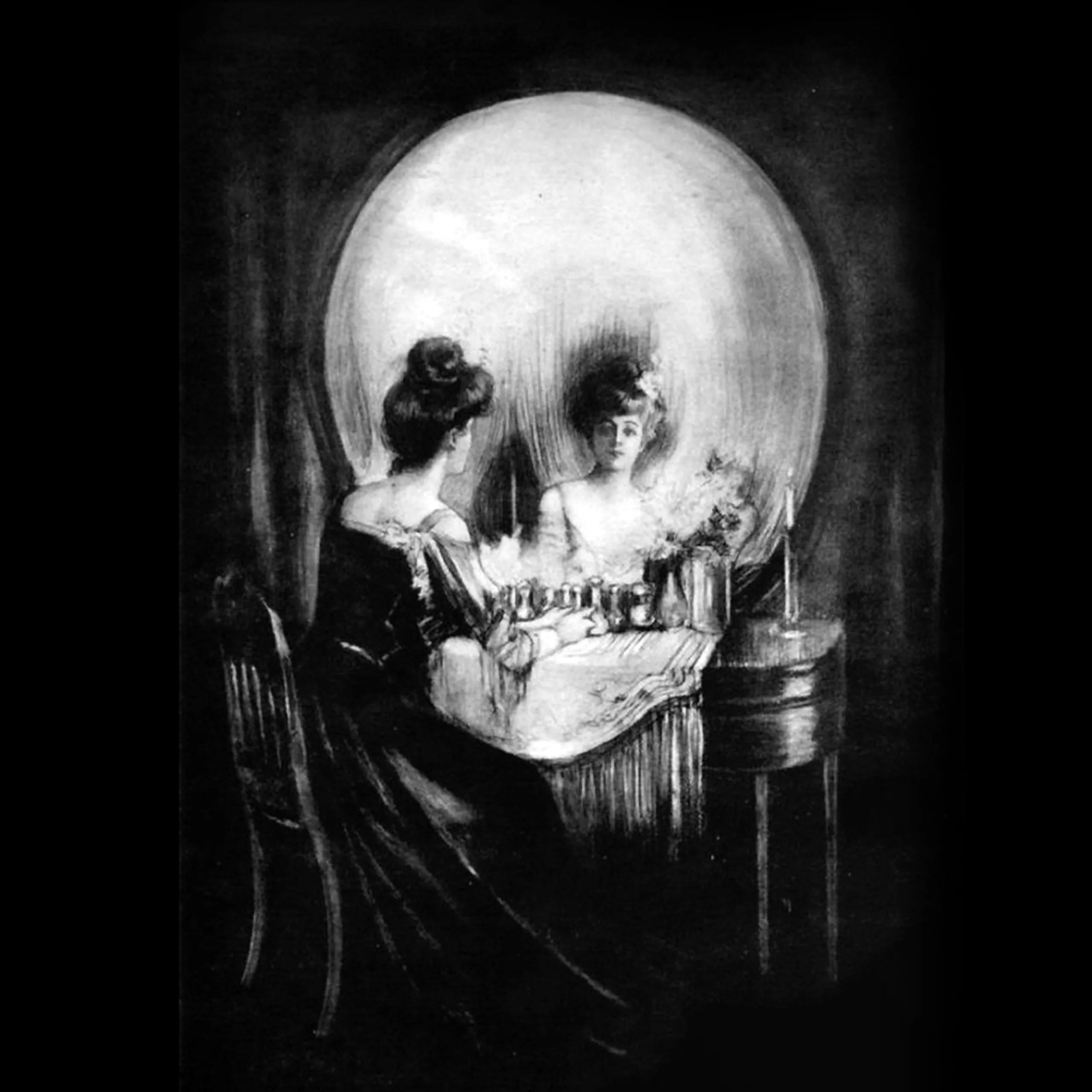

While these hybrid images are the result of recent scientific research, the art of “double images” is older. We are obviously not talking about the notion of the “double meaning” of an image that goes back to time immemorial, but simply about these optical illusions.

In the 1890s, Charles Allan Gilbert produced an impressive work entitled “All is Vanity”. It shows a young woman looking at herself in a mirror, but as the viewer steps back, a vanitas (a skull) appears. Many years later, Dior reused this device to advertise its perfume “Poison”.

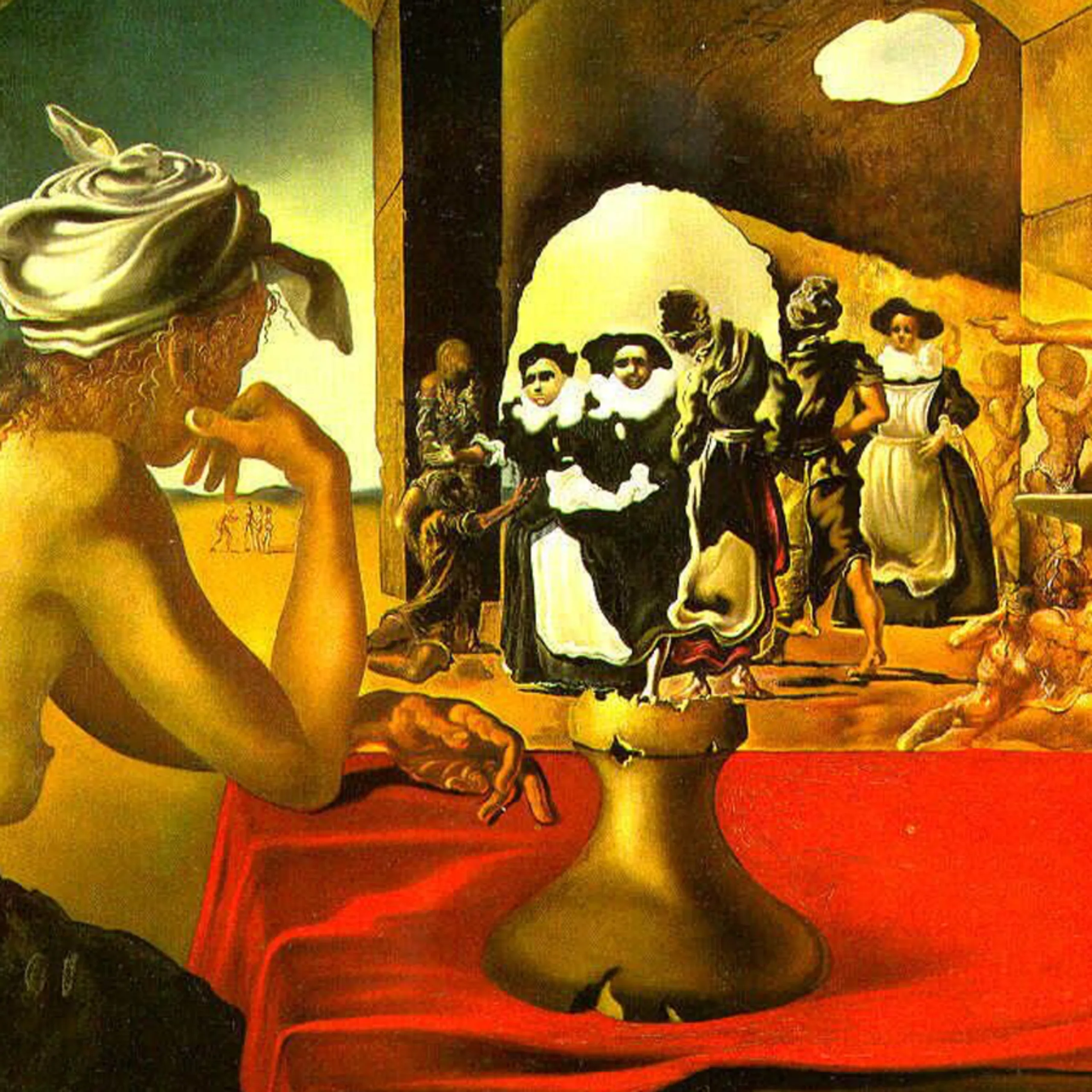

Another famous painting, from the 1940s, is Salvador Dalí’s “Slave Market”. The bust of Voltaire is hidden there among a group of figures. Once again, depending on the viewing distance, one part of the image or another appears.

An ecology of the gaze?

With these various research projects, straddling art and science, we have a better understanding of how our eyes work. Like any science, this can be used to manipulate images even more efficiently and thus force our eyes to look at them for even longer. Advertising loves this kind of use.

Conversely, these techniques can also be used to produce images that are more economical in “brain time”, in a form of cognitive ecology. In an increasingly complex world, it seems essential to us to produce fewer but better thought-out images, which inform the eye in a benevolent way and deliver the information at the right time, in the right place.

Sources: